Programmable Interfaces for AI Agents: MCP Toolchains, API Adapters, and Connector Frameworks

Introduction

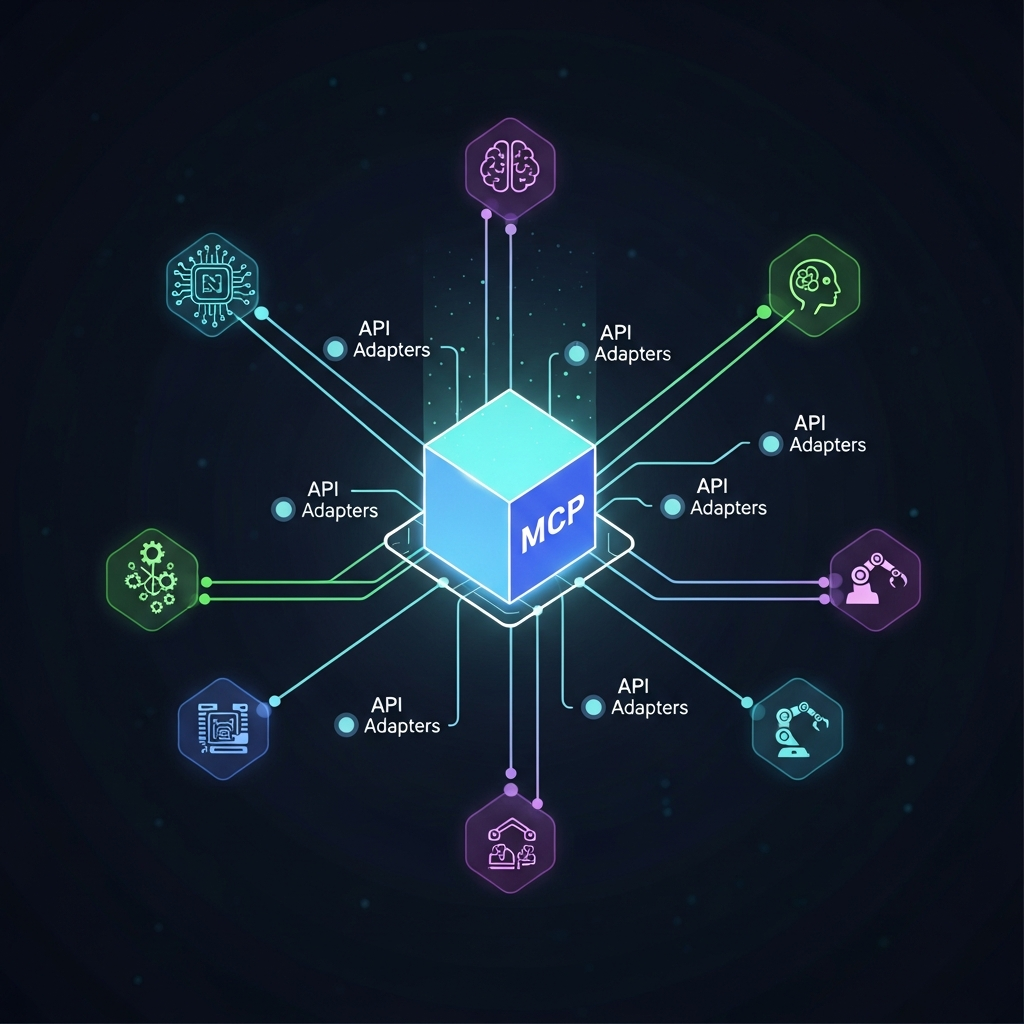

Programmable interfaces for AI agents are reshaping how enterprises connect autonomous decision engines with software systems, devices, and automation platforms. At the core, a well-designed MCP (machine cooperation pattern) toolchain harmonizes the flow of data, commands, and events between agents and endpoints. This article unpacks practical patterns, API adapters, and connector frameworks that teams use to build interoperable, scalable, and secure AI-enabled ecosystems.

For engineering leaders, the objective is not just to connect an AI agent to a single system but to orchestrate a purpose-built toolchain that supports governance, testing, traceability, and continuous improvement. The patterns discussed here apply to scenarios from enterprise resource planning (ERP) to field devices, from CRM systems to industrial controllers. The focus is pragmatic: concrete design decisions, trade-offs, and playbooks you can adapt to real-world programs.

What is an MCP Toolchain?

An MCP toolchain refers to a cohesive set of components that enable multiple AI agents to cooperate with software endpoints in a controlled and repeatable way. The goal is to standardize how agents discover services, negotiate capabilities, exchange data, and handle errors. A mature MCP toolchain emphasizes modularity, observability, and security, so teams can evolve the stack without breaking integrations.

Core components of an MCP toolchain

A practical MCP toolchain typically includes four layers. The first is a discovery layer that surfaces available endpoints, capabilities, and permissions. The second is a capability brokerage that negotiates which agent can call which service under what constraints. The third is a data and command routing layer, ensuring messages are delivered reliably, in order, and with proper security. The fourth is an observability and governance layer that captures metrics, audit trails, and policy enforcement.

Within each layer, engineers implement patterns such as API versioning, fault isolation, and rate limiting. The toolchain also embraces a catalog of adapters and connectors that translate between agent-native formats and the target endpoints’ interfaces. This modular setup helps teams add, remove, or upgrade components with minimal disruption to production systems.
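As a rough illustration of how the four layers fit together, the sketch below models discovery, brokerage, routing, and an audit trail in a single in-memory class. The names `Endpoint`, `Toolchain`, and the agent and capability strings are assumptions made for this example, not part of any standard.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Endpoint:
    name: str
    capabilities: frozenset   # operations the endpoint offers
    allowed_agents: frozenset  # agents permitted to call it


@dataclass
class Toolchain:
    endpoints: list = field(default_factory=list)
    audit_log: list = field(default_factory=list)

    # Discovery layer: which endpoints offer this capability?
    def discover(self, capability):
        return [e for e in self.endpoints if capability in e.capabilities]

    # Capability brokerage: keep only endpoints this agent may call.
    def broker(self, agent, capability):
        return [e for e in self.discover(capability) if agent in e.allowed_agents]

    # Routing plus governance: pick an endpoint and record the decision.
    def route(self, agent, capability):
        candidates = self.broker(agent, capability)
        outcome = candidates[0].name if candidates else "denied"
        self.audit_log.append(
            {"agent": agent, "capability": capability, "outcome": outcome}
        )
        if not candidates:
            raise PermissionError(f"{agent} may not use {capability}")
        return outcome


toolchain = Toolchain(
    [Endpoint("erp", frozenset({"create_invoice"}), frozenset({"billing-agent"}))]
)
chosen = toolchain.route("billing-agent", "create_invoice")
```

A real toolchain would split these layers into separate services, but keeping the audit entry next to the routing decision shows why governance is easier when it is designed in from the start.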

Patterns you’ll encounter in MCP toolchains

- Adapter-first design: Build adapters that translate AI agent intents into service calls and back.

- Capability negotiation: Agents advertise capabilities; the orchestrator selects compatible endpoints to preserve reliability.

- Event-driven communication: Use messaging to decouple agents from endpoints, improving resilience and scalability.
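The adapter-first pattern above can be sketched as a small class that turns an agent intent into a service call and maps the response back. `Intent`, `CrmAdapter`, the field names, and the `fake_crm` stub are all hypothetical and exist only to keep the example runnable without a network.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Intent:
    """Agent-facing request; the field names are assumptions for this sketch."""
    action: str
    params: dict


class CrmAdapter:
    """Hypothetical adapter for a CRM endpoint with its own field names."""

    def __init__(self, send: Callable[[str, dict], dict]):
        # `send` stands in for the real transport (HTTP client, SDK, queue).
        self._send = send

    def handle(self, intent: Intent) -> dict:
        if intent.action != "lookup_customer":
            raise ValueError(f"unsupported action: {intent.action}")
        # Intent -> service call: rename fields to the endpoint's schema.
        raw = self._send("GET /customers", {"cust_id": intent.params["customer_id"]})
        # Service response -> agent-facing result.
        return {"customer_id": raw["cust_id"], "name": raw["full_nm"]}


def fake_crm(operation: str, body: dict) -> dict:
    # Stub endpoint so the sketch runs without a network.
    return {"cust_id": body["cust_id"], "full_nm": "Ada Lovelace"}


result = CrmAdapter(fake_crm).handle(
    Intent("lookup_customer", {"customer_id": "c-42"})
)
```

The agent only ever sees `customer_id` and `name`; the endpoint's `cust_id` and `full_nm` stay inside the adapter.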

API Adapters for AI Agents

API adapters are the practical glue between AI agents and enterprise endpoints. They encapsulate endpoint quirks, authentication schemes, and data transformations, presenting a consistent interface to agents. A good adapter strategy reduces the cognitive load on AI models and speeds up integration cycles.

Key capabilities of effective adapters

Effective adapters offer authentication support (OAuth, mTLS, API keys), request/response transformation, retries with backoff, and robust error handling. They also implement idempotent operations where possible, so retrying calls does not create duplicate effects. A strong adapter layer includes metadata about rate limits, supported operations, and schema evolution to facilitate governance.
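One way to combine retries with backoff and idempotency is to generate a single idempotency key per logical call and reuse it on every attempt. The sketch below assumes the endpoint deduplicates on that key; `TransientError`, `call_with_retry`, and the `send` signature are illustrative names rather than any particular library's API.

```python
import time
import uuid
from typing import Callable


class TransientError(Exception):
    """Raised by the (hypothetical) transport for retryable failures."""


def call_with_retry(
    send: Callable[[dict, str], dict],
    payload: dict,
    max_attempts: int = 4,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> dict:
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    # One key for all attempts, so the endpoint can deduplicate retries.
    idempotency_key = str(uuid.uuid4())
    for attempt in range(1, max_attempts + 1):
        try:
            return send(payload, idempotency_key)
        except TransientError:
            if attempt == max_attempts:
                raise
            # Exponential backoff: base, 2x base, 4x base, ...
            sleep(base_delay * 2 ** (attempt - 1))


# Usage with a stub that fails twice before succeeding.
seen_keys = []
delays = []


def flaky_send(payload: dict, key: str) -> dict:
    seen_keys.append(key)
    if len(seen_keys) < 3:
        raise TransientError("temporary failure")
    return {"status": "ok"}


response = call_with_retry(flaky_send, {"amount": 10}, sleep=delays.append)
```

Passing `sleep` as a parameter keeps the function testable without real waiting. Production code would usually add jitter to the delay so that many clients do not retry in lockstep.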

Design patterns for adapters

- Gateway pattern: Centralize cross-cutting concerns like security, logging, and retries at the adapter layer.

- Translator pattern: Convert between agent-facing schemas and endpoint schemas, enabling flexibility as endpoints evolve.

- Back-compatibility strategy: Version adapters gracefully to minimize disruption during endpoint upgrades.

When designing adapters, consider the lifecycle: scaffolding for quick bootstraps, a testing harness that simulates real agent traffic, and observability hooks to track adapter health and latency.
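The translator and back-compatibility patterns can be combined by registering one translator per endpoint version. This is a minimal sketch under assumed schemas: the `v1` and `v2` payload shapes, the `TRANSLATORS` registry, and the order fields are invented for illustration.

```python
from typing import Callable

Translator = Callable[[dict], dict]

# Hypothetical registry: endpoint API version -> translator function.
TRANSLATORS = {}


def translator(version: str) -> Callable[[Translator], Translator]:
    def register(fn: Translator) -> Translator:
        TRANSLATORS[version] = fn
        return fn
    return register


@translator("v1")
def to_v1(order: dict) -> dict:
    # v1 of the assumed endpoint takes a flat amount in cents.
    return {"sku": order["sku"], "amount_cents": round(order["price"] * 100)}


@translator("v2")
def to_v2(order: dict) -> dict:
    # v2 nests the amount and adds an explicit currency.
    return {
        "sku": order["sku"],
        "amount": {"value": order["price"], "currency": order.get("currency", "USD")},
    }


def translate(order: dict, endpoint_version: str) -> dict:
    fn = TRANSLATORS.get(endpoint_version)
    if fn is None:
        raise ValueError(f"no translator for endpoint version {endpoint_version!r}")
    return fn(order)


order = {"sku": "A1", "price": 19.99}
v1_payload = translate(order, "v1")
v2_payload = translate(order, "v2")
```

Because the agent-facing `order` shape never changes, an endpoint upgrade only requires a new registered translator, and the old one can stay in place until the last caller has migrated.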

Security and compliance in adapters

Adapters must enforce least privilege, strong authentication, and auditable access controls. Data that traverses adapters may be subject to regulatory requirements; design adapters to support data masking, encryption in transit and at rest, and detailed audit logs to satisfy compliance needs.
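Data masking and audit logging can live in the same code path, so raw sensitive values never reach the log. The sketch below is one possible shape; the field names in `SENSITIVE_FIELDS` and the audit record layout are assumptions, and real masking rules would come from your compliance requirements.

```python
import hashlib
import json
from datetime import datetime, timezone

SENSITIVE_FIELDS = {"ssn", "card_number"}  # assumed field names


def mask(record: dict) -> dict:
    """Return a copy with sensitive values masked except the last four characters."""
    masked = {}
    for key, value in record.items():
        if key in SENSITIVE_FIELDS:
            text = str(value)
            # Values of four characters or fewer are masked entirely.
            visible = text[-4:] if len(text) > 4 else ""
            masked[key] = "*" * (len(text) - len(visible)) + visible
        else:
            masked[key] = value
    return masked


def audit_entry(agent: str, action: str, record: dict) -> dict:
    """Build an audit record that stores only the masked payload."""
    safe = mask(record)
    digest = hashlib.sha256(json.dumps(safe, sort_keys=True).encode()).hexdigest()
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "payload": safe,
        "payload_sha256": digest,
    }


entry = audit_entry(
    "billing-agent", "charge", {"name": "Ada", "card_number": "4111111111111111"}
)
```

The digest lets an auditor confirm that a stored entry has not been altered, without the log ever containing the unmasked card number.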

Connector Frameworks for Enterprise Interoperability

Connector frameworks provide reusable building blocks to connect AI agents with a wide range of endpoints—from databases and ERP systems to IoT devices and cloud services. A strong framework emphasizes discoverability, composability, and governance, enabling teams to assemble integrations like LEGO bricks rather than bespoke one-offs.

Open-source vs. commercial connectors

Open-source connectors offer rapid iteration and community-driven improvements, but may require more governance and risk management. Commercial connectors often come with support, security certifications, and enterprise SLAs. A mature strategy blends both: use proven commercial connectors for core risk areas while leveraging open-source adapters for agility and experimentation.

Catalog-driven governance

Maintain a central catalog of connectors, with metadata about version, compatibility, security posture, and data mappings. A governance layer enforces usage policies, tracks dependency trees, and flags deprecated endpoints before they cause outages. This catalog becomes a living blueprint for multi-product teams that share integrations.
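A catalog entry can be as simple as a record with version, dependencies, and a retirement date, which is already enough to flag deprecations before they cause outages. The `ConnectorEntry` fields, connector names, and the 30-day horizon below are assumptions for this sketch.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class ConnectorEntry:
    name: str
    version: str
    depends_on: tuple = ()
    deprecated_after: Optional[date] = None  # None means no planned retirement


def deprecation_warnings(catalog: dict, today: date, horizon_days: int = 30) -> list:
    """List connectors retiring within the horizon, plus their direct dependents."""
    retiring = {
        name
        for name, entry in catalog.items()
        if entry.deprecated_after is not None
        and (entry.deprecated_after - today).days <= horizon_days
    }
    warnings = [f"{name} is deprecated or retiring soon" for name in sorted(retiring)]
    for name, entry in sorted(catalog.items()):
        for dep in entry.depends_on:
            if dep in retiring:
                warnings.append(f"{name} depends on retiring connector {dep}")
    return warnings


catalog = {
    "legacy-erp": ConnectorEntry("legacy-erp", "1.4.0", deprecated_after=date(2025, 1, 31)),
    "invoice-sync": ConnectorEntry("invoice-sync", "2.0.1", depends_on=("legacy-erp",)),
    "crm": ConnectorEntry("crm", "3.2.0"),
}
warnings = deprecation_warnings(catalog, today=date(2025, 1, 15))
```

Running a check like this in CI gives teams that share a connector an early signal, rather than a surprise when the endpoint disappears.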

Observability and reliability

Connector frameworks should surface end-to-end visibility across the MCP, including latency budgets, retry counts, and failure domains. Centralized dashboards help operators spot bottlenecks, while automated test suites guard against regression when adapters are updated.
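To make latency budgets and retry counts concrete, the following sketch collects both per connector and reports which connectors exceeded the budget. `ConnectorMetrics` is a hypothetical in-memory helper; a production setup would export these values to a metrics backend instead.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class ConnectorMetrics:
    def __init__(self, latency_budget_s: float):
        self.latency_budget_s = latency_budget_s
        self.latencies = defaultdict(list)  # connector -> list of seconds
        self.retries = defaultdict(int)     # connector -> retry count

    @contextmanager
    def timed(self, connector: str, clock=time.perf_counter):
        start = clock()
        try:
            yield
        finally:
            # Record the duration even if the wrapped call raised.
            self.latencies[connector].append(clock() - start)

    def record_retry(self, connector: str) -> None:
        self.retries[connector] += 1

    def over_budget(self) -> list:
        return sorted(
            name
            for name, samples in self.latencies.items()
            if max(samples) > self.latency_budget_s
        )


# Usage with a fake clock so the example is deterministic.
ticks = iter([0.0, 0.05, 1.0, 1.4])
metrics = ConnectorMetrics(latency_budget_s=0.2)
with metrics.timed("crm", clock=lambda: next(ticks)):
    pass  # a fast call
with metrics.timed("erp", clock=lambda: next(ticks)):
    metrics.record_retry("erp")  # a slow call that needed one retry
slow = metrics.over_budget()
```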

Architecture Options: API-First, Event-Driven, and Data Ops

Choosing the right architecture is about aligning with business goals, data gravity, and security requirements. Each approach has trade-offs in latency, scalability, and operability. In practice, many teams blend patterns to support diverse endpoints and evolving AI workloads.

API-first design

An API-first approach emphasizes stable, well-documented interfaces. Agents interact through clearly defined contracts, enabling parallel development and easier versioning. A mature API-first stack uses REST or gRPC with schema validation, automated tests, and contract testing to prevent surprises during integration.
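Contract checks can start small. The sketch below validates a payload against a field-to-type mapping using only the standard library; it is a stand-in for real schema tooling such as JSON Schema, OpenAPI, or protobuf validation, and the `CONTRACT` fields are invented for the example.

```python
# Assumed contract for a hypothetical "create order" call.
CONTRACT = {"order_id": str, "quantity": int, "express": bool}


def contract_violations(payload: dict, contract: dict) -> list:
    """Return human-readable problems; an empty list means the payload conforms."""
    problems = []
    for field, expected in contract.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif type(payload[field]) is not expected:
            # Exact type match, so True is not accepted where an int is required.
            actual = type(payload[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    for field in sorted(payload.keys() - contract.keys()):
        problems.append(f"unexpected field: {field}")
    return problems


ok = contract_violations(
    {"order_id": "o-1", "quantity": 2, "express": False}, CONTRACT
)
bad = contract_violations({"order_id": "o-1", "quantity": "2"}, CONTRACT)
```

Running the same check in the agent's tests and in the adapter's tests is a lightweight form of contract testing: both sides fail fast when the agreed shape drifts.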

Event-driven architectures

Event-driven designs decouple producers and consumers, enabling asynchronous processing and high resilience. Message queues and streaming platforms (e.g., Kafka, Kinesis) offer durable delivery of commands and data, with ordering typically guaranteed within a partition or shard rather than across the whole stream. For AI agents, events provide a natural mechanism to react to changes in enterprise systems without polling.
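The decoupling idea can be shown with an in-process queue. This sketch uses `asyncio.Queue` purely as a stand-in for a broker such as Kafka or Kinesis: it has no durability or partitioning, and the event shapes and function names are assumptions.

```python
import asyncio


async def producer(queue: asyncio.Queue, events: list) -> None:
    # The enterprise system publishes events without knowing who consumes them.
    for event in events:
        await queue.put(event)
    await queue.put(None)  # sentinel: no more events


async def agent_consumer(queue: asyncio.Queue, handled: list) -> None:
    # The agent reacts to events as they arrive instead of polling the source.
    while True:
        event = await queue.get()
        if event is None:
            break
        handled.append(f"{event['type']}:{event['id']}")


async def main() -> list:
    queue = asyncio.Queue(maxsize=10)  # bounded, so a slow consumer applies backpressure
    handled = []
    events = [
        {"type": "order.created", "id": 1},
        {"type": "order.shipped", "id": 1},
    ]
    await asyncio.gather(producer(queue, events), agent_consumer(queue, handled))
    return handled


handled = asyncio.run(main())
```

Neither coroutine references the other; they share only the queue, which is the property that lets producers and consumers be deployed and scaled independently.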

Data operations and governance

As AI agents ingest data from multiple sources, a strong data ops discipline is essential. Data lineage, access controls, and data quality checks protect data integrity. Telemetry from data flows informs model monitoring, drift detection, and compliance auditing.

Best Practices: Security, Governance, and Compliance

Security and governance are foundational in enterprise integrations. When AI agents control or influence critical workflows, you must enforce robust security models, continuous risk assessment, and clear ownership of data. The following practices help reduce risk while preserving agility.

Security by design

Embed authentication, authorization, and encryption into every layer. Use mutual TLS for service-to-service calls, rotate credentials regularly, and apply least-privilege access to adapters and endpoints. Automated security testing should be part of every release cycle, with threat models revisited whenever the architecture changes.
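For the mutual TLS recommendation, a server-side context built with Python's standard `ssl` module might look like the sketch below. The function name and the certificate path parameters are placeholders; in practice the paths would point at credentials issued by your own certificate authority.

```python
import ssl


def mtls_server_context(cert_file=None, key_file=None, client_ca_file=None):
    """Build a server context that rejects clients without a valid certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # CERT_REQUIRED on a server context is what makes the TLS handshake mutual.
    ctx.verify_mode = ssl.CERT_REQUIRED
    if cert_file and key_file:
        # The server's own identity (placeholder paths supplied by the caller).
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if client_ca_file:
        # The CA bundle used to verify client certificates.
        ctx.load_verify_locations(cafile=client_ca_file)
    return ctx


context = mtls_server_context()
```

Without the certificate files the context is not usable for real connections; the point of the sketch is the `verify_mode` setting, which is the piece most often left at its permissive default.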

Compliance and data governance

Map data flows to applicable regulations (GDPR, HIPAA, etc.) and implement data minimization strategies. Maintain data retention policies, consent management, and auditable logs. Build in privacy-preserving techniques where possible, such as anonymizing data before it is used for analytics or model training.

Quality, testing, and reliability

Institute end-to-end testing that covers adapters, connectors, and agent behavior. Use synthetic data for testing to avoid exposing live systems during development. Employ chaos engineering to validate resilience, and define escalation paths for incident response.

Practical Integration Playbook: Step-by-Step

Below is a pragmatic sequence for teams starting a programmable-interfaces initiative for AI agents. Tailor the steps to your organization’s risk tolerance, vendor governance, and product roadmap.

- Define goals and success metrics for the MCP toolchain, including latency, reliability, and security targets.

- Inventory endpoints and establish a preliminary adapter catalog with mapping rules and authentication requirements.

- Design a lightweight discovery service to surface capabilities and ensure agents can locate endpoints dynamically.

- Implement the first adapters for high-value endpoints, starting with read and write operations that have clearly idempotent behavior.

- Deploy an event-driven backbone for asynchronous communications and decoupled processing.

- Establish a governance layer with versioning, access controls, and auditing across adapters and connectors.

- Introduce observability: metrics, traces, and logs that tie back to business outcomes.

- Iterate with pilots, validate ROI, and scale by adding more endpoints and agents.

Real-World Patterns and Case Studies

Organizations use MCP toolchains to enable AI agents to perform orchestrated tasks across systems. A common pattern is a central orchestration layer that routes intents to the most appropriate adapter, with a fallback to a secondary path if a service is unavailable. In addition, teams often employ model-driven routing where simple intents go to lightweight adapters while complex workflows invoke richer microservices.
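The routing-with-fallback pattern described above can be sketched as an ordered list of adapters per intent, tried in turn. `route_intent`, `ServiceUnavailable`, and the payment adapters are hypothetical names for this example.

```python
from typing import Callable

Adapter = Callable[[dict], dict]


class ServiceUnavailable(Exception):
    """Raised by an adapter when its endpoint cannot be reached."""


def route_intent(intent: dict, routes: dict) -> dict:
    """Try each adapter registered for the intent in order; return the first success."""
    adapters = routes.get(intent["name"])
    if not adapters:
        raise LookupError(f"no route for intent {intent['name']!r}")
    errors = []
    for adapter in adapters:
        try:
            return adapter(intent)
        except ServiceUnavailable as exc:
            errors.append(str(exc))  # remember why this path failed, then fall back
    raise ServiceUnavailable("; ".join(errors))


def primary_gateway(intent: dict) -> dict:
    raise ServiceUnavailable("primary gateway timed out")


def secondary_gateway(intent: dict) -> dict:
    return {"handled_by": "secondary", "amount": intent["amount"]}


routes = {"pay_invoice": [primary_gateway, secondary_gateway]}
outcome = route_intent({"name": "pay_invoice", "amount": 120}, routes)
```

Only `ServiceUnavailable` triggers the fallback. Other exceptions, such as a validation error, propagate immediately, which avoids retrying a request that would fail on every path.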

In practice, this means a fintech product can route an AI-initiated transaction through an identity and risk check, an accounting ledger, and finally a payment gateway, all while capturing an end-to-end audit trail. A healthcare platform might route alerts from an AI clinician assistant to an EHR system, a compliance repository, and a notification service for clinicians, again with end-to-end traceability.

These patterns are not hypothetical. They reflect real-world needs: reliable cross-system automation, secure data handling, and the ability to evolve the agent capabilities without rearchitecting every integration from scratch.

Evaluation and ROI

When assessing programmable interfaces for AI agents, measure both technical health and business impact. Technical metrics include adapter latency, error rate, throughput, and time-to-restore after failures. Business metrics focus on faster feature delivery, reduced integration maintenance, and improved accuracy or efficiency of autonomous tasks.
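The technical metrics can be computed from plain call records. The sketch below shows one way to derive error rate, 95th-percentile latency (nearest-rank method), and mean time-to-restore; the sample numbers are made up for illustration and are not measurements.

```python
def error_rate(outcomes: list) -> float:
    """Fraction of calls that failed; `outcomes` holds True for each success."""
    return outcomes.count(False) / len(outcomes)


def p95(latencies_ms: list) -> float:
    """95th percentile by the nearest-rank method."""
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def mean_time_to_restore(incidents: list) -> float:
    """Average of (restored - started) across incidents, in the input's time unit."""
    return sum(restored - started for started, restored in incidents) / len(incidents)


# Illustrative sample data only.
outcomes = [True] * 18 + [False] * 2
latencies_ms = list(range(1, 21))        # 1 ms .. 20 ms
incidents = [(0, 30), (100, 150)]        # (started, restored) in minutes

summary = {
    "error_rate": error_rate(outcomes),
    "p95_latency_ms": p95(latencies_ms),
    "mttr_minutes": mean_time_to_restore(incidents),
}
```

Percentiles are usually more informative than averages for adapter latency, because a small number of slow calls is exactly what an agent workflow tends to stall on.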

ROI drivers

- Faster time-to-market for new agent-enabled features due to reusable adapters.

- Lower total cost of ownership through centralized governance and standardized connectors.

- Improved reliability and auditability, enabling safer use of AI in regulated environments.

Track ROI with a simple framework: define the value hypothesis, monitor leading indicators (latency, success rate), and quantify realized benefits after each release. Use these insights to refine adapters, improve governance, and justify further investments.

Getting Started: A Quick Maturity Path

Organizations typically begin with a focused pilot, selecting a small set of high-value endpoints and a few AI agents. As the pilot matures, extend the catalog, formalize governance, and scale the architecture. A staged approach reduces risk while delivering observable value early.

8-step starter plan

- Align on a measurable objective for the MCP toolchain.

- Map the initial set of endpoints and agents to target adapters.

- Build a minimal discovery and governance layer for visibility and control.

- Create adapters for core actions with solid error handling.

- Introduce event-driven primitives to decouple agents from endpoints.

- Implement security controls and data governance basics.

- Instrument observability to track performance and business impact.

- Scale to additional endpoints and agents based on lessons learned.

Throughout this journey, maintain a clear documentation layer and a living catalog of connectors. This foundation helps teams onboard faster, governance teams stay aligned, and engineers avoid brittle, one-off integrations.