Deployment Path: Validate Signals, Build Observability, Integrate Workflows

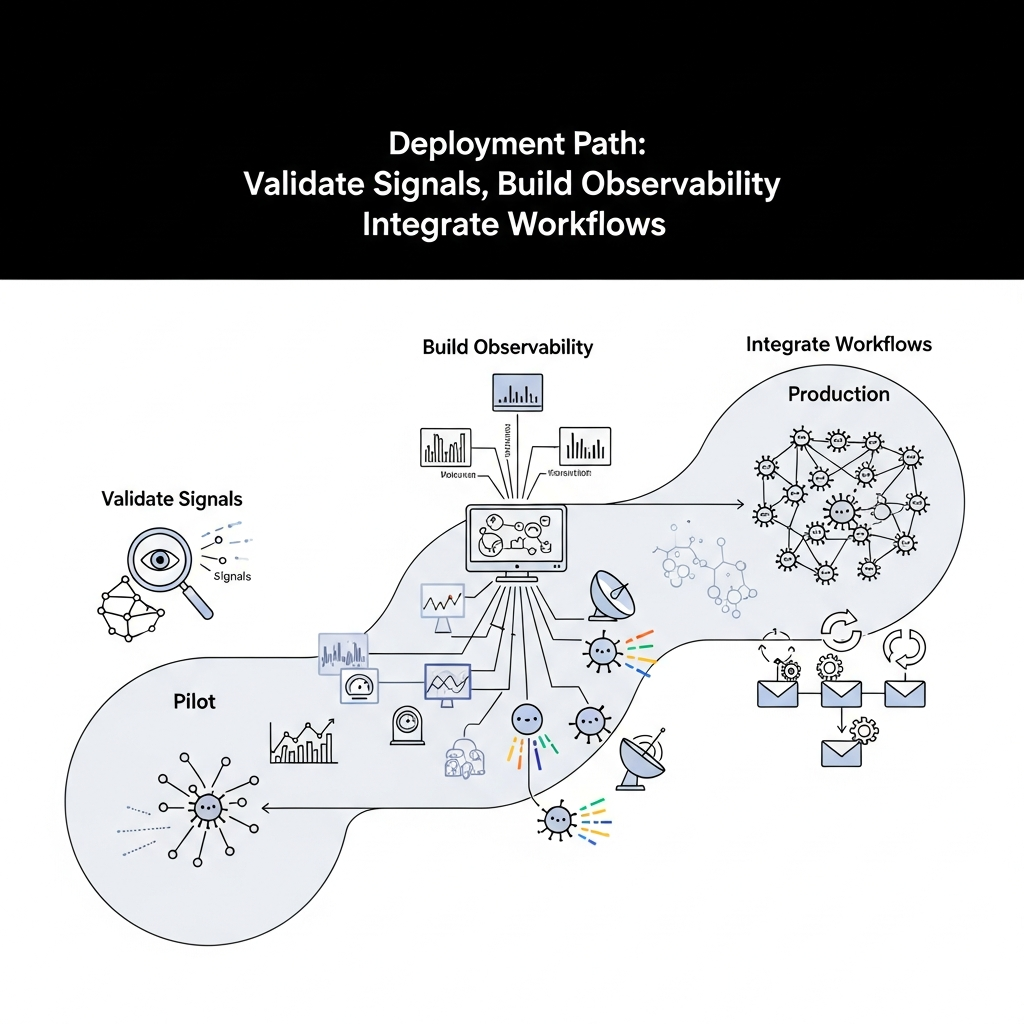

Stage 1 — Validate Signals

An agentic deployment starts with well-defined signals that guide autonomous behavior. Signals should be observable, actionable, and tied to real business outcomes. Before scaling to production, teams must formalize what success looks like, how signals are measured, and how they influence agent decisions.

Begin with a signal catalog that categorizes inputs the agent will consider, such as accuracy, latency, confidence, risk indicators, and user impact. Each signal should have a clear acceptance criterion and a fallback plan if the signal proves unreliable. Design experiments that validate the signal in a controlled PoC environment and translate those findings into production guardrails.

Example signals in a customer support agent scenario might include response accuracy, time to first meaningful answer, escalation rate, and user satisfaction. For each signal, specify the data sources, collection frequency, and the threshold that shifts behavior from autonomous to human-in-the-loop control. Build in traceability so you can audit decisions and improve signals over time.
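A signal catalog entry and its behavior-shifting threshold can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `Signal` fields, the catalog entries, the owner and data source names, and the threshold values are all hypothetical, chosen only to mirror the customer support scenario above.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AUTONOMOUS = "autonomous"
    HUMAN_IN_THE_LOOP = "human_in_the_loop"


@dataclass(frozen=True)
class Signal:
    name: str
    owner: str
    data_source: str
    collection_frequency: str
    threshold: float
    higher_is_better: bool = True

    def is_healthy(self, value: float) -> bool:
        """Return True when the observed value meets the acceptance criterion."""
        if self.higher_is_better:
            return value >= self.threshold
        return value <= self.threshold


# Hypothetical catalog entries; owners, sources, and thresholds are assumptions.
CATALOG = [
    Signal("response_accuracy", "support-ml", "qa_sampling", "hourly", 0.90),
    Signal("escalation_rate", "support-ops", "ticketing_system", "hourly", 0.15,
           higher_is_better=False),
]


def choose_mode(catalog, observed):
    """Fall back to human-in-the-loop if any signal is missing or unhealthy."""
    for signal in catalog:
        value = observed.get(signal.name)
        if value is None or not signal.is_healthy(value):
            return Mode.HUMAN_IN_THE_LOOP
    return Mode.AUTONOMOUS
```

Treating a missing reading the same as an unhealthy one is a deliberate choice in this sketch: an unreliable signal triggers the fallback plan rather than silently permitting autonomy.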

Practical steps to validate signals:

- Define a signal glossary with owner, data source, and calculation method.

- Instrument data collection at the source system level and through the agent itself.

- Run parallel tracks where autonomous actions and human oversight are both visible to assess performance.

- Set go/no-go criteria for each signal before promotion to production.
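One way to turn the parallel-track and go/no-go steps above into something checkable is to compare what the agent would have done with what the human reviewer actually did. The sketch below assumes each observation is an `(agent_action, human_action)` pair; the function names and the default thresholds (95% agreement, 100 samples) are illustrative assumptions, not recommended values.

```python
def agreement_rate(pairs):
    """Share of cases where the agent's proposed action matched the human's."""
    if not pairs:
        raise ValueError("at least one (agent_action, human_action) pair is required")
    matches = sum(1 for agent_action, human_action in pairs
                  if agent_action == human_action)
    return matches / len(pairs)


def go_no_go(pairs, min_agreement=0.95, min_samples=100):
    """Return "go" only with enough samples and enough agreement."""
    if len(pairs) < min_samples:
        return "no-go"  # not enough evidence to promote the signal
    return "go" if agreement_rate(pairs) >= min_agreement else "no-go"
```

Requiring a minimum sample size keeps a short, lucky run from passing the gate.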

Frameworks for signal validation include a two‑tier approach: a proof of concept that shows the signal works under ideal conditions, followed by a controlled production pilot where real users and edge cases are observed. This staged approach reduces risk and provides a defensible route to scale.

Stage 2 — Build Observability for Autonomous Features

Observability is the backbone of reliable autonomous systems. It enables you to understand what the agent did, why it did it, and when things go wrong. A robust observability stack combines metrics, logs, and traces to provide a unified view of system health, decision quality, and user impact.

Key components include instrumentation of signals, continuous collection of telemetry, and dashboards that reflect both technical health and business outcomes. Adopt an open, standard approach such as a telemetry pipeline that supports structured events, correlation identifiers, and consistent naming conventions across services and agents.

Best practices for observability:

- Expose SLOs and SLIs for autonomous behaviors, not just system uptime.

- Instrument decisions with context: why a decision was made, what data was used, and what risk was accepted.

- Use tracing to map end-to-end flows across services, plugins, and external APIs.

- Automate anomaly detection and alerting with clear escalation paths and defined error budgets.
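Instrumenting a decision with context can be as simple as emitting one structured event per decision, keyed by a correlation identifier. The following is a sketch using only the Python standard library; the event name `agent.decision` and the field names are assumptions for illustration, not an established schema.

```python
import json
import time
import uuid


def new_correlation_id():
    """Generate an identifier that ties one request's events together."""
    return uuid.uuid4().hex


def decision_event(correlation_id, action, reason, inputs, confidence, accepted_risk):
    """Build a structured record of what was decided, why, and on what data."""
    return {
        "event": "agent.decision",        # hypothetical event name
        "timestamp": time.time(),
        "correlation_id": correlation_id,
        "action": action,
        "reason": reason,                 # why the decision was made
        "inputs": inputs,                 # what data was used
        "confidence": confidence,
        "accepted_risk": accepted_risk,   # what risk was accepted
    }


def emit(event, sink=print):
    """Serialize the event as one JSON line; the sink stands in for a log shipper."""
    sink(json.dumps(event, sort_keys=True))
```

Passing the same `correlation_id` through every service call lets logs and traces for one user request be joined later.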

Illustrative architecture patterns for observability include a centralized telemetry collector, a metrics store, a log/indexing system, and a visualization layer. This setup supports proactive risk mitigation, rapid diagnosis, and continuous improvement of autonomous features.

Stage 3 — Integrate Workflows with Agents

Autonomous features rarely operate in isolation. They must seamlessly plug into existing workflows, data pipelines, and user journeys. Integration patterns should emphasize reliability, security, and graceful degradation when autonomy cannot perform as expected.

Three common integration patterns are:

- Event-driven orchestration: agents respond to domain events and publish follow‑up events, enabling decoupled, scalable workflows.

- API-first orchestration: agents expose stable APIs that external systems rely on, with versioned contracts and idempotent operations.

- Workflow engine integration: agents participate in long‑running processes managed by a workflow engine, including compensation actions for errors.
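The compensation idea in the workflow engine pattern can be illustrated without any engine at all. The sketch below is a simplified, in-process stand-in: each step is a hypothetical `(action, compensation)` pair of callables, and a real workflow engine would add persistence, retries, and timeouts that are out of scope here.

```python
def run_with_compensation(steps):
    """Run (action, compensation) pairs in order.

    If an action raises, run the compensations of the already-completed
    steps in reverse order and report failure.
    """
    completed = []
    try:
        for action, compensate in steps:
            action()
            completed.append(compensate)
    except Exception:
        for compensate in reversed(completed):
            compensate()
        return False
    return True
```

Undoing in reverse order matters because later steps often depend on the effects of earlier ones.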

Security and data governance must be baked into integration. Enforce least privilege, audit trails, and data minimization. Implement idempotent operations to avoid duplicate actions in retry scenarios and design robust fallback strategies that involve human oversight when confidence is low.
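Idempotency under retries is commonly handled with an idempotency key: the caller attaches the same key to every retry of one logical request, and the receiver replays the stored result instead of acting twice. The class below is a minimal in-memory sketch under that assumption; the name `IdempotentExecutor` is hypothetical, and a production version would need a durable, concurrency-safe store with expiry.

```python
class IdempotentExecutor:
    """Run an operation at most once per idempotency key (in-memory sketch)."""

    def __init__(self):
        self._results = {}

    def execute(self, idempotency_key, operation):
        # A retry with a known key returns the stored result without re-running.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        result = operation()
        self._results[idempotency_key] = result
        return result
```

For example, an agent that retries a refund after a timeout would reuse the key derived from the original request, so the refund is issued once even if the call is made three times.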

When wiring workflows, document interaction points, data schemas, and error handling semantics. A clear integration specification reduces handoff friction and accelerates the path to production readiness.

Stage 4 — Production Metrics for Autonomy

Measuring autonomous performance goes beyond traditional throughput metrics. A production metric strategy should capture both technical health and business value. Define a small, targeted set of metrics that can evolve as maturity grows.

Recommended production metrics include:

- Autonomy readiness score: a composite index combining signal validity, decision confidence, and observed risk.

- Decision latency and round‑trip time: how long the agent takes to act, including any human-in-the-loop step.

- Success rate of autonomous decisions: percentage of actions completed without escalation.

- Fallback rate and escalation latency: how often human intervention occurs and how quickly it happens.

- User impact metrics: satisfaction, retention, activation, and conversion linked to autonomous actions.

- Quality of data used for decisions: timeliness, completeness, and bias indicators.
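Several of the metrics above reduce to simple arithmetic over decision records. The sketch below shows one possible formulation; the `DecisionRecord` fields, the weighted-average form of the readiness score, and the default weights are assumptions made for illustration, since the composite index can be defined in many ways.

```python
from dataclasses import dataclass


@dataclass
class DecisionRecord:
    escalated: bool    # True if a human had to take over
    latency_s: float   # time from trigger to completed action, in seconds


def success_rate(records):
    """Share of decisions completed without escalation."""
    if not records:
        raise ValueError("no decision records")
    return sum(1 for r in records if not r.escalated) / len(records)


def fallback_rate(records):
    """Share of decisions that required human intervention."""
    return 1.0 - success_rate(records)


def readiness_score(signal_validity, decision_confidence, observed_risk,
                    weights=(0.4, 0.4, 0.2)):
    """Weighted composite in [0, 1]; inputs are expected in [0, 1].

    Risk is inverted so that lower observed risk raises the score.
    The weights are illustrative, not recommended values.
    """
    w_validity, w_confidence, w_risk = weights
    return (w_validity * signal_validity
            + w_confidence * decision_confidence
            + w_risk * (1.0 - observed_risk))
```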

Translate these telemetry insights into governance decisions. Establish an error budget for autonomous components and plan for gradual rollouts with controlled exposure. Tie production metrics to business outcomes so leadership can see value and risk in concrete terms.
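An error budget for an autonomous component can be derived directly from its SLO: if the target is that 99% of decisions succeed, the remaining 1% is the budget. The helper below is a sketch of that arithmetic; the function names and the 50% threshold used to gate rollout expansion are hypothetical.

```python
def error_budget_remaining(slo_target, total_decisions, failed_decisions):
    """Fraction of the error budget still unspent (negative if overspent)."""
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be strictly between 0 and 1")
    if total_decisions <= 0:
        raise ValueError("total_decisions must be positive")
    allowed_failures = (1.0 - slo_target) * total_decisions
    return 1.0 - failed_decisions / allowed_failures


def may_expand_rollout(slo_target, total_decisions, failed_decisions,
                       min_remaining=0.5):
    """Gate wider exposure on having enough budget left (threshold is an assumption)."""
    remaining = error_budget_remaining(slo_target, total_decisions, failed_decisions)
    return remaining >= min_remaining
```

With a 99% target over 10,000 decisions, 100 failures are allowed; 25 failures leave about 75% of the budget, so this gate would permit expansion, while 80 failures would not.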

Stage 5 — Architecture Options and Roadmap Patterns

There is no one-size-fits-all architecture for agentic deployments. The right choice depends on data sensitivity, regulatory constraints, latency requirements, and the ability to operate at scale. A practical roadmap often follows a modular, API-driven approach with an emphasis on observability and governance.

Three core architectural layers commonly emerge:

- Signal and decision layer: components that generate signals, evaluate confidence, and trigger actions.

- Orchestration and integration layer: event buses, adapters, and workflow engines that connect agents to systems and processes.

- Observability and governance layer: telemetry, dashboards, and policy controls that ensure safety and compliance.

Deployment options range from fully cloud-native microservices with managed observability to hybrid models that keep sensitive components on-premises. Consider API-first design, feature flags for controlled rollouts, and modular services that enable incremental autonomy without rewriting core systems. A thoughtful roadmap aligns milestones with business goals and regulatory requirements while maintaining a clear path to scale.
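A feature flag for controlled rollouts is often implemented as a deterministic percentage bucket: each user hashes to a stable bucket from 0 to 99, and the flag is on for buckets below the rollout percentage. The sketch below uses `hashlib` from the standard library; the function name and the flag and user identifiers are hypothetical, and a managed flag service would typically replace this in practice.

```python
import hashlib


def in_rollout(flag_name, user_id, percent):
    """Return True if this user falls inside the flag's rollout percentage.

    The bucket depends only on (flag_name, user_id), so a user's exposure is
    stable across calls, and raising the percentage only ever adds users.
    """
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < percent
```

Including the flag name in the hash keeps the same users from always being the first exposed to every new autonomous feature.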

Practical roadmaps often look like this:

- Phase 1: PoC to pilot with a narrow domain of signals and a minimal autonomy surface.

- Phase 2: Expand signals, improve observability, and establish cross‑service integrations.

- Phase 3: Introduce governance, compliance checks, and human-in-the-loop review for high‑risk decisions.

- Phase 4: Scale across domains and increase autonomous decision boundaries with robust monitoring.

Stage 6 — Playbooks, Checklists, and Templates

Operational playbooks translate theory into repeatable action. Use lightweight templates that teams can customize for their domain and risk profile. A few starter playbooks include the Signal Validation Playbook, the Observability Readiness Checklist, and the Autonomous Workflows Integration Guide.

Signal Validation Playbook highlights:

- Objective and success criteria for each signal

- Data source and data quality checks

- Experiment design and rollback plan

- Approval gates for moving signals into production

Observability Readiness Checklist covers:

- Telemetry instrumented across all components

- SLOs with measurable targets

- Alerting, runbooks, and on-call procedures

- Dashboards that tie technical health to business impact

Autonomous Workflows Integration Guide includes:

- Interface contracts and idempotency guarantees

- Security and data governance requirements

- Error handling and compensation paths

- Change management and versioning strategy

Stage 7 — Common Pitfalls and Risk Mitigation

Autonomy introduces new failure modes. Common pitfalls include overloading the system with signals, brittle integrations, drift in decision quality, and insufficient governance. Proactive risk management reduces the chance of regressions after deployment.

Risk mitigation strategies:

- Limit autonomous scope with progressive rollouts and feature flags

- Build strong data governance and privacy controls from day one

- Implement continuous testing with production-like data and simulated edge cases

- Design for safe degradation and a clear human-in-the-loop handoff when needed

- Regularly review signal validity and adjust thresholds as data matures

Security and compliance considerations should be woven into the architecture from the start. Maintain auditable trails for decisions and data used, and enforce access controls that align with organizational risk tolerance.

Stage 8 — Next Steps and Practical Action

Embarking on an agentic deployment requires a disciplined, measurable approach. Start with a two- to four-week discovery sprint to map signals, identify data sources, and draft a minimal observability plan. From there, define a phased rollout that gradually expands autonomy while maintaining safety nets and clear governance.

Key actions to begin today:

- Assemble a cross-functional team with product, data, security, and platform owners

- Create a concise signal catalog and a baseline telemetry plan

- Draft an autonomy readiness score and target SLIs/SLOs

- Choose a modular architecture pattern that supports incremental autonomy

- Define a review cadence for signals, dashboards, and integration points

If you are evaluating partners to accelerate this journey, start with a structured RFP that asks for governance capabilities, observability maturity, and a clear path to ROI. Look for teams with proven experience in end-to-end product engineering, security compliance, and scalable offshore delivery models. The path to production should feel deliberate, testable, and auditable from day one.