Autonomous Agents vs Traditional Automation: A Practical Comparison for Product Managers

Real PM Challenge: Scaling features without increasing complexity or team size

Imagine a growing SaaS product with an onboarding flow that must support 10x the user base within 12 months. The goals are clear: reduce time to value, improve activation rates, and maintain a high-quality user experience. Adding headcount to build bespoke automation for every new feature is unlikely to scale. The PM team needs a pattern for automating decision-making, workload assignment, and user interactions without bloating the roadmap or introducing fragile handoffs between teams.

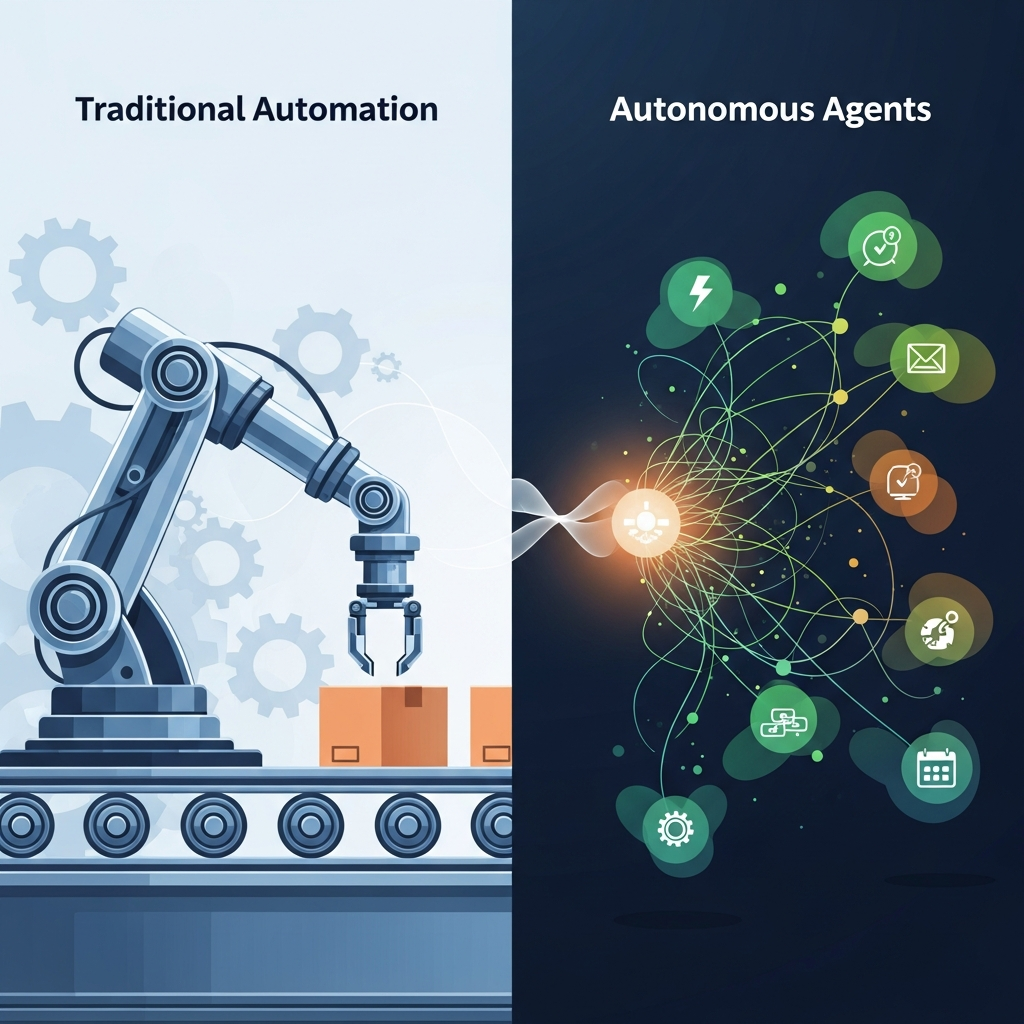

In practice, teams often start with rule-based automation: a simple trigger, a single action, and a scripted outcome. But as product scope expands—multiple onboarding screens, personalized nudges, multi-channel notifications, and evolving retention experiments—the complexity grows faster than the team can manage. This is where the choice between traditional automation and autonomous agents becomes consequential, not cosmetic.

This article helps product managers compare traditional automation with autonomous agents in a way that’s concrete, actionable, and aligned with product roadmaps. It treats agentic AI as a strategic evolution rather than a buzzword, with clear trade-offs, guardrails, and a practical decision framework.

Traditional automation in product workflows

Rules and triggers

Traditional automation generally relies on explicit rules and event-driven triggers. When a predefined condition is met, a predetermined action is executed. Examples include: a welcome email when a new user signs up, a reminder notification if a user hasn’t completed a setup step, or a churn-risk alert triggered by inactivity thresholds.

These rules are usually encoded in a workflow engine or a rule-based service. They excel at repeatable, well-scoped tasks with clear inputs and outputs. They are predictable when the inputs stay within known patterns.
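
As a minimal sketch of the pattern described above, a rule can be modeled as a trigger event, a condition, and an action. The `Rule` type, the event names, the action names, and the 14-day inactivity threshold are all hypothetical and are not tied to any particular workflow engine.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    event: str                         # trigger event name (hypothetical)
    condition: Callable[[dict], bool]  # predicate over the event payload
    action: str                        # name of the action to run (hypothetical)


RULES = [
    Rule("user_signed_up", lambda p: True, "send_welcome_email"),
    Rule("setup_check", lambda p: not p.get("setup_complete", False), "send_setup_reminder"),
    # Assumed threshold: 14 days of inactivity counts as churn risk.
    Rule("activity_check", lambda p: p.get("days_inactive", 0) >= 14, "raise_churn_risk_alert"),
]


def evaluate(event: str, payload: dict) -> list[str]:
    """Return the actions whose trigger event and condition both match."""
    return [r.action for r in RULES if r.event == event and r.condition(payload)]
```

Every new edge case in this style becomes another entry in `RULES`, which is what makes the approach predictable for known inputs and costly to maintain as the list grows.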

Orchestration and architecture

Traditional automation often relies on orchestrated microservices or a centralized automation layer. It emphasizes determinism, reliability, and auditability. The architecture favors isolated decision points and explicit handoffs between components, with logs and retry policies to handle failures.
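
The logging and retry behavior mentioned above can be sketched as a small wrapper around a workflow step. This is an illustration only: the function name, the default of three attempts, and the exponential backoff schedule are assumptions, not details from any specific automation platform.

```python
import logging
import time
from typing import Callable

logger = logging.getLogger("automation")


def run_with_retry(step: Callable[[], str], max_attempts: int = 3, base_delay: float = 0.5) -> str:
    """Run a step, logging every attempt and retrying failures with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            result = step()
            logger.info("step succeeded on attempt %d", attempt)
            return result
        except Exception as exc:
            logger.warning("attempt %d failed: %s", attempt, exc)
            if attempt == max_attempts:
                raise  # give up and surface the last error to the caller
            time.sleep(base_delay * 2 ** (attempt - 1))
```

The log lines are what give this style of automation its audit trail: each attempt and outcome is recorded at an explicit, isolated decision point.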

Limitations and failure modes

Limitations emerge as the product grows in scope. Rules can become brittle when inputs shift, edge cases appear, or the user context changes. Managing exceptions requires more rules, increasing maintenance cost. There’s also a tendency toward siloed automation that doesn’t adapt to new workflows without explicit reconfiguration. Finally, complex multi-step decision-making across channels (in-app, email, SMS) can lead to inconsistent user experiences if not carefully orchestrated.

In short, traditional automation is excellent for well-defined tasks, but it struggles with ambiguity, cross-functional decision-making, and evolving product strategies.

Autonomous Agents in a Product Context

Goal-driven behavior

Autonomous agents operate with goals set by the product team and autonomously plan actions to achieve those goals. They use context signals, data, and constraints to select actions that align with the objective. For example, an agent might decide to enroll a user in a contextual onboarding path, escalate a support ticket, or tailor a notification timing based on observed behavior.

Decision loops

Agentic systems continuously loop through sensing, reasoning, acting, and learning. They can re-evaluate decisions as new data arrives, enabling dynamic adaptation to changes in user behavior, market conditions, or product experiments. This loop reduces manual reconfiguration and enables faster iteration on features that influence retention and engagement.
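
To make the sense, reason, act, learn loop concrete, here is a toy sketch. The article does not prescribe a learning method, so this example substitutes a simple epsilon-greedy strategy as a stand-in; production agents may rely on very different techniques. The class name, action names, and reward signal are hypothetical.

```python
import random


class NudgeAgent:
    """Toy sense-reason-act-learn loop using an epsilon-greedy choice."""

    def __init__(self, actions, epsilon=0.1, seed=0):
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {a: 0 for a in actions}
        self.values = {a: 0.0 for a in actions}  # running mean reward per action

    def reason(self, context: dict) -> str:
        # This toy version ignores context; a real agent would condition on it.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.counts))  # explore
        return max(self.values, key=self.values.get)   # exploit best-known action

    def learn(self, action: str, reward: float) -> None:
        # Incremental update of the mean reward for the chosen action.
        self.counts[action] += 1
        self.values[action] += (reward - self.values[action]) / self.counts[action]

    def step(self, sense, act) -> str:
        context = sense()               # sense: read current signals
        action = self.reason(context)   # reason: choose an action
        reward = act(action, context)   # act: execute and observe the outcome
        self.learn(action, reward)      # learn: update estimates
        return action
```

Calling `step` repeatedly with a `sense` function that reads user signals and an `act` function that returns, say, 1.0 when the user completes onboarding lets the agent shift toward whichever nudge performs better without anyone editing a rule.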

Adaptability and governance

Agentic AI workflow patterns balance autonomy with guardrails. They can respect policies, privacy constraints, and compliance requirements while still pursuing high-value outcomes. For enterprises, agents can operate within predefined governance boundaries, with human-in-the-loop checks for high-risk decisions.
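
One way to picture a guardrail is as a policy check that runs before any agent-proposed action executes. The sketch below is illustrative only: the `ProposedAction` type, the risk labels, the blocked-action list, and the consent flag are assumptions rather than part of any specific framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    name: str
    risk: str                        # "low" or "high" (hypothetical labels)
    uses_personal_data: bool = False


# Assumption: actions that policy never allows an agent to take on its own.
BLOCKED_ACTIONS = {"delete_account"}


def review(action: ProposedAction, user_consented: bool) -> str:
    """Return 'block', 'needs_human_review', or 'allow' for a proposed action."""
    if action.name in BLOCKED_ACTIONS:
        return "block"
    if action.uses_personal_data and not user_consented:
        return "block"                # privacy constraint takes priority
    if action.risk == "high":
        return "needs_human_review"   # human-in-the-loop checkpoint
    return "allow"
```

Keeping the policy separate from the agent's planning logic means governance teams can tighten or relax the boundaries without touching how the agent reasons.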

The term "agentic AI systems" captures the idea that product teams aren’t just coding a sequence of steps; they’re shaping a system that can navigate uncertainty, adjust to user context, and optimize for business outcomes with minimal manual reconfiguration.

When designed well, agentic approaches can deliver benefits such as faster experimentation cycles, better contextual relevance, and improved cross-channel coordination without a proportional increase in team size.

Side-by-Side Comparison

| Dimension | Traditional Automation | Autonomous Agents |
|---|---|---|
| Flexibility | Predictable, rule-bound; changes require reconfiguring rules; limited ability to improvise beyond defined paths. | Goal-driven and context-aware; can reinterpret goals as inputs change; can replan actions without manual rule updates. |
| User Experience | Consistent within defined flows but brittle when contexts shift; channel coordination requires explicit wiring. | Adaptive interactions across in-app and external channels; can personalize timing and content on the fly while maintaining guardrails. |
| Scalability | Scales with more rules; maintenance grows with the number of edge cases; complexity can explode. | Scales by learning and re-planning; reduces manual rule growth; architecture supports cross-product workflows and multi-domain decisions. |
| Maintenance Cost | High, due to rule proliferation and exception handling; requires ongoing rule auditing and versioning. | Moderate to high upfront for architectural design; lower long-term maintenance as agents adapt; governance controls remain essential. |
| Risk and Control | Predictable risks tied to rules; hard to anticipate unforeseen edge cases; audit trails exist but can be complex. | Guardrails and human-in-the-loop safety are critical; risk can be managed with policy constraints, explainability, and monitoring. |

The table highlights that traditional automation excels in stability for well-defined tasks, while autonomous agents offer adaptability and cross-channel coordination essential for growth at scale.

PM Dimensions: Flexibility, User Experience, Scalability, Maintenance Cost, Risk

Flexibility

Traditional automation is strong when the problem space is narrow and well-understood. Autonomous agents shine when the problem space is broad, ambiguous, or evolving, allowing teams to pivot priorities without rewriting large swaths of logic.

User Experience

Rule-based flows deliver predictable interactions. Agentic patterns tailor experiences based on user context, enabling dynamic onboarding prompts, adaptive notifications, and proactive support that feels timely and relevant.

Scalability

Automation scales by adding more rules and state machines, which can become unwieldy. Agents scale by leveraging context and goals to coordinate across features and channels with fewer manual rule augmentations.

Maintenance Cost

Rule-heavy systems demand ongoing rule curation and testing. Agentic systems incur upfront architectural work and governance, but can reduce rule churn and rework over time.

Risk and Control

Automation risk is tied to edge cases in rules. Agentic approaches require robust guardrails, monitoring, and human oversight for high-risk decisions, but offer clearer pathways to auditing decisions through logs and policy constraints.

Real SaaS Scenarios: Onboarding, Notifications, Retention, Support Flows

Onboarding and Activation

Traditional automation might sequence emails and in-app prompts based on sign-up events. An autonomous agent could interpret user signals (feature exploration pace, time since last action, device usage) and adapt onboarding paths in real time, guiding users toward completion with personalized milestones.

Notifications and Engagement

Rule-based notifications fire at fixed times. An agent-driven approach analyzes micro-behaviors, platform load, and user preferences, then chooses the notification timing and content most likely to drive opens and conversions across channels.

Retention and Reactivation

Rules may trigger re-engagement messages after inactivity. An autonomous agent might assess whether a user is genuinely dormant or just temporarily busy, then choose a re-engagement strategy that aligns with the user’s goals and product stage.

Support Flows

Rules route tickets to the right queue. An agent can triage, escalate, or resolve requests by evaluating sentiment, urgency, and history, orchestrating cross-team handoffs if needed while maintaining a transparent audit trail.
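
The triage step can be sketched as a function that weighs sentiment, urgency, and history, then records its decision. A real agent would likely score these signals with a model; the fixed thresholds, value scales, and decision labels below are assumptions used only to keep the example self-contained.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ticket:
    sentiment: float    # -1.0 (very negative) to 1.0 (very positive), assumed scale
    urgency: int        # 1 (low) to 5 (critical), assumed scale
    prior_tickets: int  # earlier tickets from the same user


def triage(ticket: Ticket, audit_log: list) -> str:
    """Choose escalate, auto_resolve, or route_to_queue and log the decision."""
    if ticket.urgency >= 4 or (ticket.sentiment < -0.5 and ticket.prior_tickets >= 3):
        decision = "escalate"
    elif ticket.urgency <= 2 and ticket.prior_tickets == 0:
        decision = "auto_resolve"
    else:
        decision = "route_to_queue"
    # Record inputs alongside the outcome so the decision can be audited later.
    audit_log.append({"ticket": ticket, "decision": decision})
    return decision
```

Appending the inputs and the outcome to `audit_log` on every call is what keeps the audit trail transparent, whichever branch is taken.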

When Automation is Sufficient and When Autonomous Agents are Better

Use traditional automation when the problem is well-scoped, inputs are predictable, and outcomes are clearly defined. Examples include simple onboarding steps, status-based messaging, and basic reminders.

Opt for autonomous agents when the problem space includes ambiguity, cross-channel coordination, and rapid experimentation. Agentic approaches excel in dynamic onboarding, personalized engagement, adaptive support, and situations where the product strategy evolves quickly.

In practice, many teams start with automation for stability, then layer autonomous agents to handle edge cases, optimize interactions, and accelerate experiments. A staged adoption helps maintain governance while extracting incremental value.
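
The guidance in this section can be condensed into a small helper for discussion purposes. It is a deliberately simplified sketch: the four yes/no inputs and the returned labels are assumptions, and a real decision would also weigh data readiness, risk tolerance, and cost.

```python
def recommend_approach(
    inputs_predictable: bool,
    outcomes_well_defined: bool,
    cross_channel: bool,
    rapid_experimentation: bool,
) -> str:
    """Map four coarse problem traits to a suggested starting approach."""
    well_scoped = inputs_predictable and outcomes_well_defined
    needs_adaptivity = cross_channel or rapid_experimentation
    if well_scoped and not needs_adaptivity:
        return "traditional automation"
    if well_scoped and needs_adaptivity:
        return "automation first, agents layered on top"
    # Unpredictable inputs or loosely defined outcomes imply ambiguity.
    return "autonomous agents"
```

For example, a basic reminder flow with predictable inputs and no cross-channel needs maps to "traditional automation", while a well-scoped flow that also spans channels maps to the staged approach.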

Risks, Guardrails & Governance for PMs

- Data privacy and compliance: ensure context collection and actions obey privacy rules, especially in regulated industries.

- Human-in-the-loop for high-risk decisions: keep a review path for critical user journeys and sensitive data changes.

- Explainability and auditability: maintain logs of agent decisions and provide rationale where feasible.

- Security and integrity: design defenses against manipulation of decision loops and data poisoning.

- Governance and policy boundaries: codify constraints, approvals, and monitoring dashboards for governance teams.

- Vendor risk and integration: ensure partner systems meet security and reliability standards; establish clear SLAs.

Governance is not a blocker; it is the enabler of scalable autonomy. Clear guardrails let teams experiment with ambitious product ideas while preserving customer trust.

Practical Decision Framework & Checklist for PMs

- Define the core goal and success metrics for the feature or workflow.

- Map inputs, signals, and decision points across channels and teams.

- Assess data readiness, privacy constraints, and governance requirements.

- Evaluate whether outcomes are deterministic enough for automation or require adaptive decision-making.

- Choose an approach: start with automation for stability, add autonomous agents for adaptability, or blend both where appropriate.

- Plan a pilot with clear success criteria and a short horizon for learning.

- Define guardrails, human-in-the-loop points, and monitoring dashboards.

- Establish metrics for ROI, activation, retention, and support efficiency.

- Document an integration roadmap: data sources, APIs, and system owners.

- Iterate publicly: share results with stakeholders and adjust the roadmap accordingly.

Checklist takeaway: align product goals with capability fit, test early, govern strictly, and measure impact relentlessly.

Closing Thoughts

Autonomous agents and traditional automation each serve a purpose in product leadership. The most successful PMs view agentic AI as a strategic evolution that complements and extends what automation can achieve. The right approach depends on the problem space, data readiness, risk tolerance, and the speed of learning required to reach product-market fit.

By applying a practical decision framework, product managers can design roadmaps that balance stability and experimentation, ensure governance, and deliver measurable improvements in onboarding, engagement, and support experiences. The result is a scalable product organization that can adapt to changing user needs without a linear increase in headcount.